Johannes von Oswald

I am a research scientist at Google in Zurich, working with Blaise Agüera y Arcas and João Sacramento in the Paradigms of Intelligence Team.

Until 2023, I was a PhD student supervised by Angelika Steger and João Sacramento at the Institute of Theoretical Computer Science, ETH Zurich.

My research focuses on how and what machines, in particular neural networks, learn from data. One important goal is to allow these learning algorithms to generalize broadly and solve novel complex tasks.

Therefore, I am heavily inspired by (meta-)learning within a large, possibly open-ended, environment.

Currently, I investigate how state-of-the-art neural network models, in particular transformer-based large language models, can move beyond pattern matching to learn and implement algorithmic-like solutions.

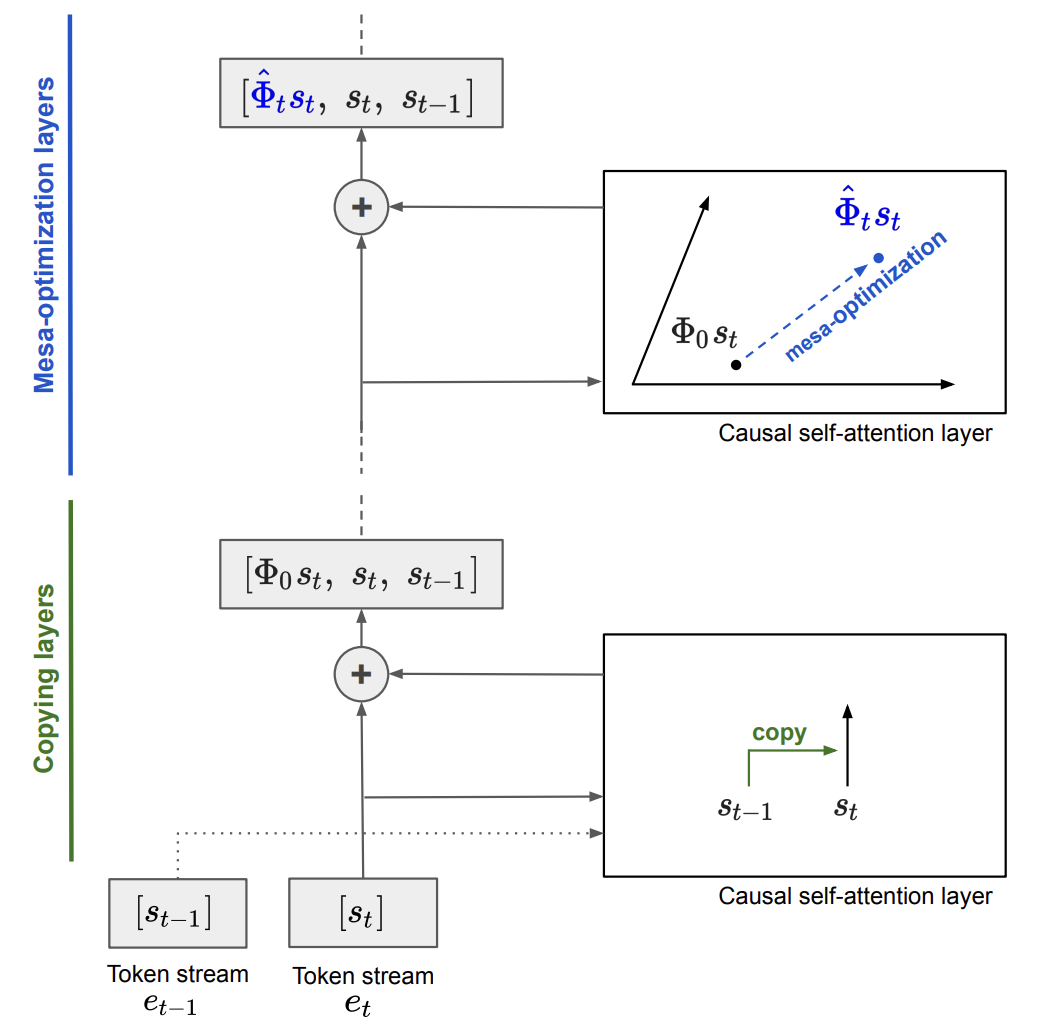

This led me to work on mesa-optimization: the emergence of optimization algorithms within neural networks!

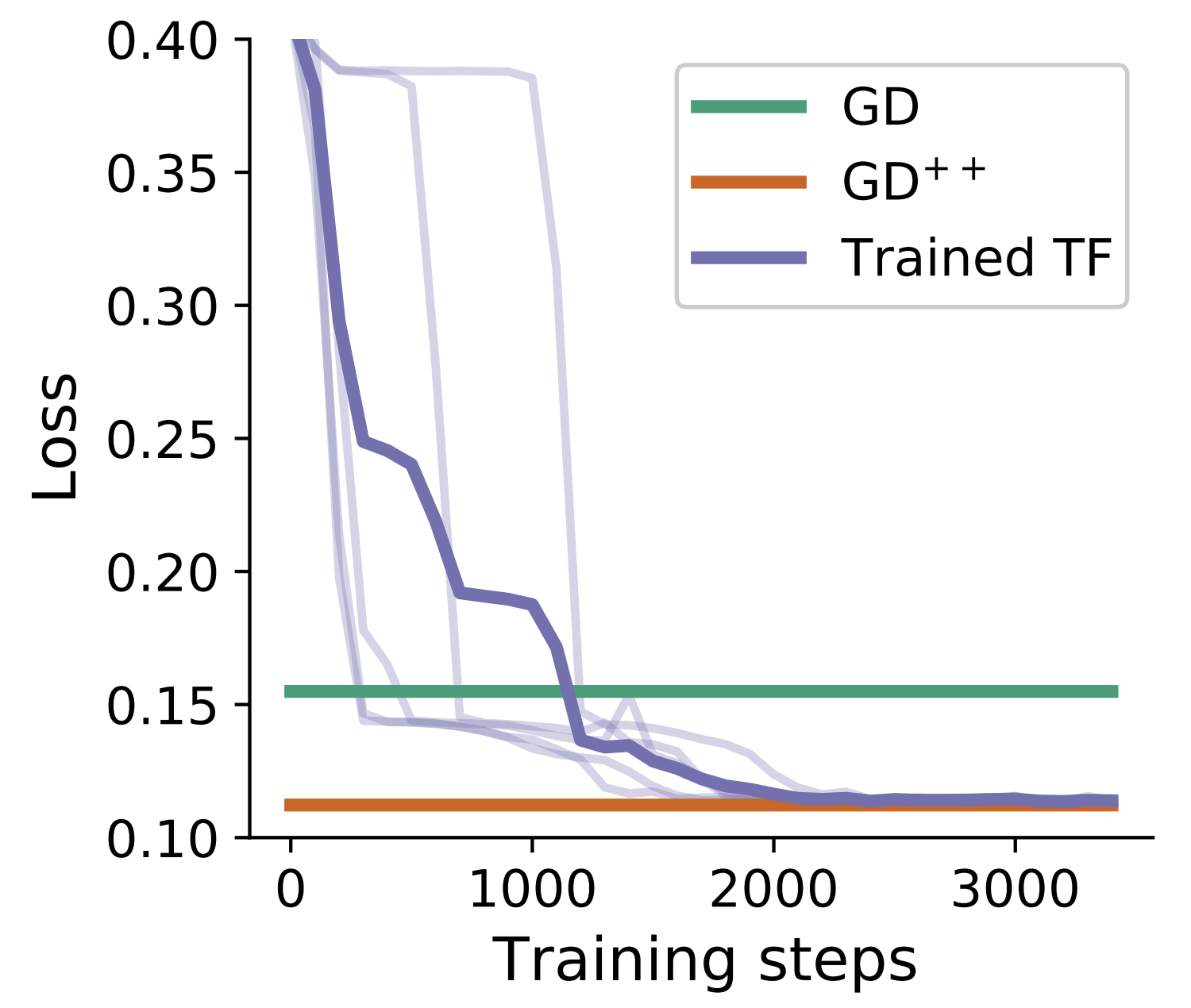

In recent work, we showed that gradient descent-based algorithms

can be implemented within the activations of transformers by simple autoregressive outer-optimization. This allows transformers to learn and

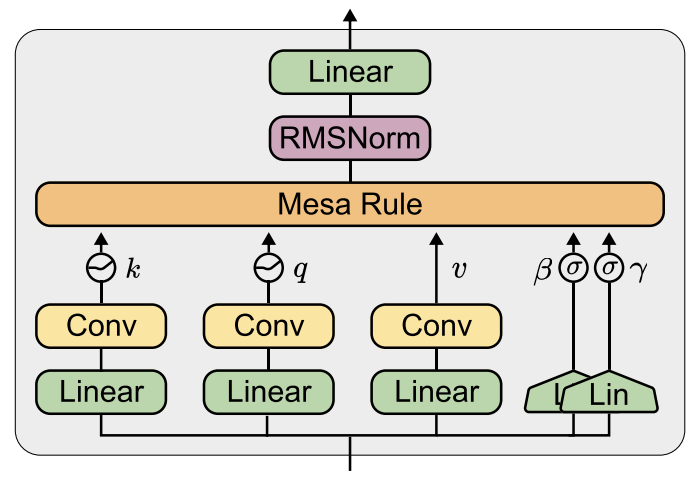

generalize at test time to novel data provided in-context. This led to the development of a novel recurrent neural

network architecture, the MesaNet, which we tested on language modeling at scale.
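To make the idea of gradient descent inside activations concrete, here is a minimal sketch of a well-known special case. It assumes linear regression with scalar targets, weights initialized at zero, and an attention layer without softmax; the function names and the toy data are illustrative assumptions, not the construction or code from the papers listed below. Under these assumptions, the prediction after one gradient step equals a linear attention readout with keys `x_i`, values `y_i`, and the test input as the query.

```python
import random


def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def gd_step_prediction(xs, ys, x_query, lr):
    """Predict on x_query after one gradient step from w = 0.

    Loss: 1/(2N) * sum_i (w . x_i - y_i)^2 over the in-context examples.
    """
    n, d = len(xs), len(x_query)
    w = [0.0] * d
    # Gradient of the loss at the current w.
    grad = [
        sum((dot(w, x) - y) * x[j] for x, y in zip(xs, ys)) / n
        for j in range(d)
    ]
    w = [wj - lr * gj for wj, gj in zip(w, grad)]
    return dot(w, x_query)


def linear_attention_prediction(xs, ys, x_query, lr):
    """Softmax-free attention: keys x_i, values y_i, query x_query."""
    n = len(xs)
    scores = [dot(x, x_query) for x in xs]  # key-query similarities
    return lr / n * sum(s * y for s, y in zip(scores, ys))


# Toy in-context regression task (hypothetical data).
rng = random.Random(0)
d, n = 4, 16
w_true = [rng.gauss(0, 1) for _ in range(d)]
xs = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n)]
ys = [dot(w_true, x) for x in xs]
x_query = [rng.gauss(0, 1) for _ in range(d)]

pred_gd = gd_step_prediction(xs, ys, x_query, lr=0.1)
pred_attn = linear_attention_prediction(xs, ys, x_query, lr=0.1)
print(abs(pred_gd - pred_attn) < 1e-9)
```

The two predictions agree up to floating-point rounding because, starting from zero weights, the gradient step yields `w = (lr / N) * sum_i y_i * x_i`, and applying that to the query is exactly a weighted sum of the values `y_i` with weights `x_i . x_query`.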

Recently, I became more intrigued by hardware, and I started working with Charlotte Frenkel on exciting ideas to run neural networks on the hardware substrates they deserve!

I am always interested in scientific collaborations, particularly in helping students with their research. If you want to collaborate with me and think that your research fits my interests, please reach out to me via one of the social media channels below or via email at jovoswald[at]gmail.com.

News

- April, 2026 - I gave a talk about our mesa-optimization and mesa layer work at Tufa labs, an exciting new research lab in Zurich.

- December, 2025 - Tristan Torchet is joining Google PI as a student researcher and working with Charlotte and me on new exciting hardware ideas.

- October, 2025 - Marwa Abdulhai is joining Google PI as a student researcher and working with Nino and me on dynamic evaluations.

- October, 2025 - Our work on gradient descent within Transformers was discussed by Andrej Karpathy on the Dwarkesh Patel Podcast! https://youtu.be/lXUZvyajciY?si=jeoFgabWE-IkMh1N

- July, 2025 - Nino and I are hosting a student researcher for the end of this year at Google - please apply here.

- June, 2025 - Nino and I presented the MesaNet at the ASAP seminar. Please see the slides here.

- June, 2025 - João gave a talk at the Kempner Institute at Harvard University about our work on mesa-optimization and the MesaNet.

- April, 2025 - Yassir gave an excellent oral presentation of our work at ICLR 2025 on how to learn randomized algorithms within transformers.

- March, 2024 - Our work on gradient descent within Transformers was discussed by Sholto Douglas & Trenton Bricken on the Dwarkesh Patel Podcast! https://youtu.be/UTuuTTnjxMQ?si=6HV5ahGM3YXY1K50&t=264

- December, 2023 - I successfully defended my PhD thesis! I am particularly happy about the front and back cover :).

- December, 2023 - I gave a short talk at the Alignment Workshop in New Orleans about our mechanistic interpretability work on in-context learning.

Research Highlights

-

MesaNet: Sequence Modeling by Locally Optimal Test-Time Training

Johannes von Oswald*, Nino Scherrer*, Seijin Kobayashi, Luca Versari, Songlin Yang, Maximilian Schlegel, Kaitlin Maile, Yanick Schimpf, Oliver Sieberling, Alexander Meulemans, Rif A. Saurous, Guillaume Lajoie, Charlotte Frenkel, Razvan Pascanu, Blaise Agüera y Arcas and João Sacramento

ArXiv 2025

Paper Code Colab Tutorial

-

Uncovering Mesa-Optimization Algorithms in Transformers

Johannes von Oswald*, Maximilian Schlegel*, Alexander Meulemans, Seijin Kobayashi, Eyvind Niklasson, Nicolas Zucchet, Nino Scherrer, Nolan Miller, Mark Sandler, Blaise Agüera y Arcas, Max Vladymyrov, Razvan Pascanu and João Sacramento

ICLR 2024, Workshop on Understanding of Foundation Models (Oral)

Paper Code

-

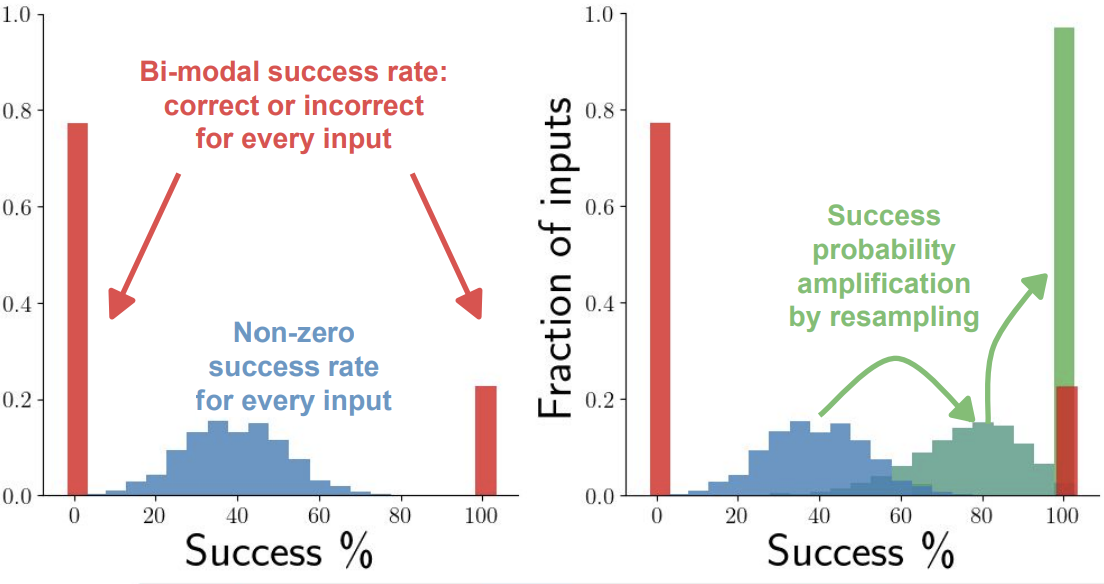

Learning Randomized Algorithms with Transformers

Johannes von Oswald*, Seijin Kobayashi*, Yassir Akram*, Angelika Steger

ICLR 2025, Oral Presentation

Paper

-

Weight decay induces low-rank attention layers

Seijin Kobayashi, Yassir Akram, Johannes von Oswald

NeurIPS 2024

Paper

-

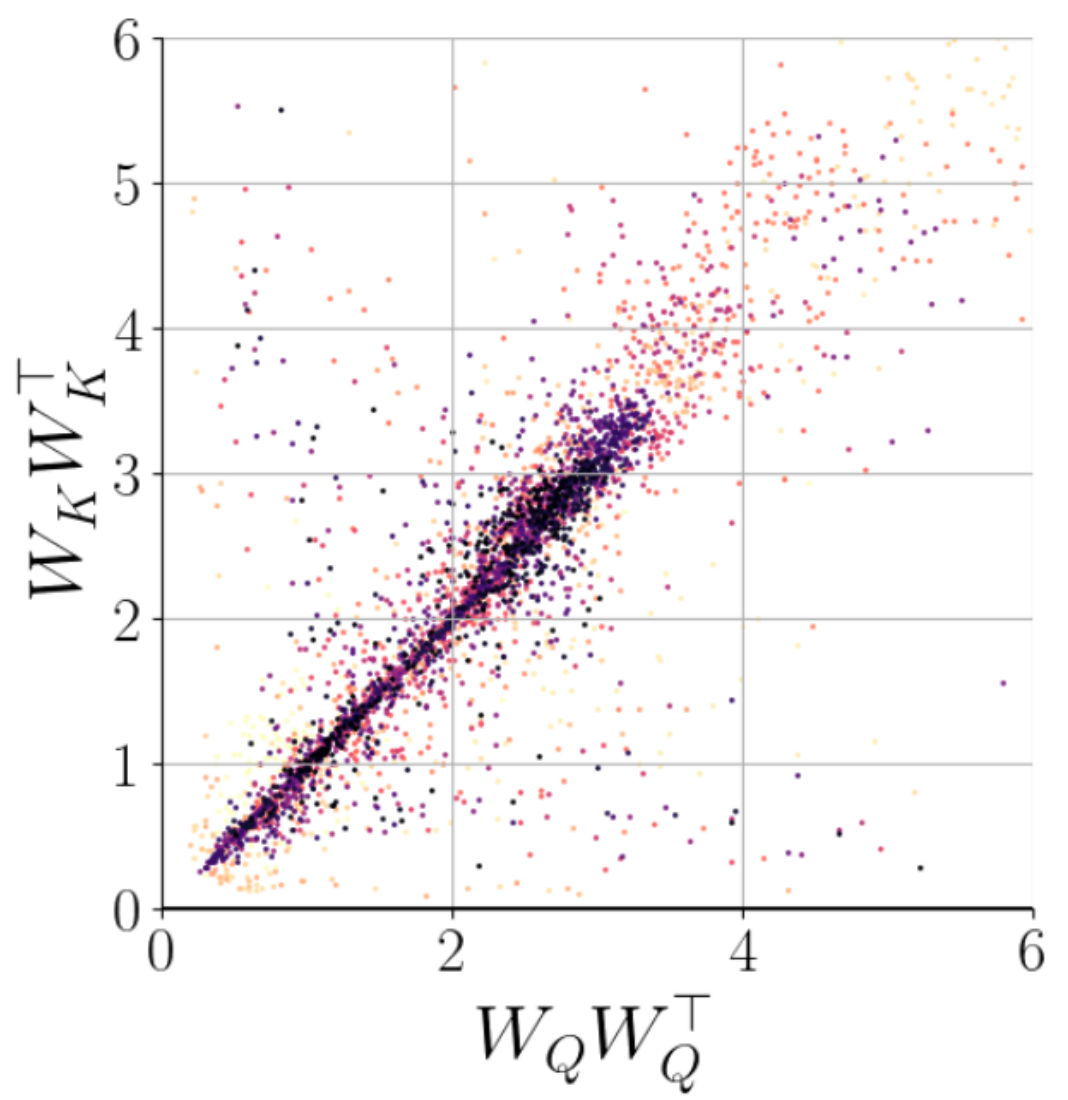

Transformers learn in-context by gradient descent

Johannes von Oswald, Eyvind Niklasson, Ettore Randazzo, João Sacramento, Alexander Mordvintsev, Andrey Zhmoginov, Max Vladymyrov

ICML 2023, Oral Presentation

Paper Code